Three graduate students will present their research at the first Beckman Graduate Student Seminar of the fall 2021 semester: Michael Fanous, bioengineering; Nil Parikh, aerospace engineering; and Moitreya Chatterjee, electrical and computer engineering.

The event takes place Wednesday, Sept. 8 at noon. Register to attend on Zoom.

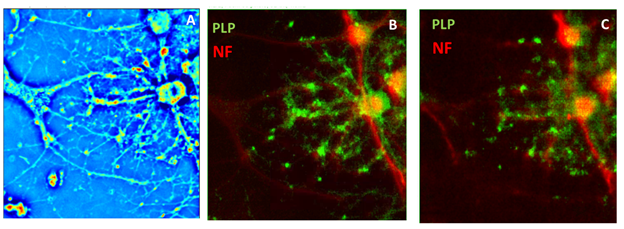

SLIM image of oligodendrocyte on the far right, enveloping the axon of a neuron in the top right corner (A), the corresponding fluorescent channels of PLP and NF (B), and the artificially generated fluorescence from the SLIM frame (C). Image courtesy of Michael Fanous.

Phase imaging with computational specificity (PICS): principle and applications in biomedicine

Michael Fanous, bioengineering

Methods involving artificial intelligence have become increasingly popular in biomedical research, radically transforming the way various biomarkers are detected and assessed. In this talk, I will present a new technique that combines quantitative phase imaging and AI, termed phase imaging with computational specificity, which provides information about unlabeled live cells with high specificity. Our imaging system allows for automatic training, while inference is built into the acquisition software and runs in real time.

Through supervised machine learning, we have incorporated classification, localization, and segmentation schemes using this novel approach. The result has been an accelerated and highly specific, label-free analysis of biological samples. We have successfully applied this methodology to investigate sub-cellular compartments of spheroids, myelin distributions in brain tissue, white blood cells in blood smears, collagen fibers in breast biopsies, and fluorescent stains in neuron-oligodendrocyte cocultures.

This talk will include a discussion of the PICS method along with a summary of some of the related projects and plans for future use.

Biography

Michael Fanous is a Ph.D. student in bioengineering working in the Quantitative Light Imaging Laboratory under the direction of Gabriel Popescu. His research focuses on combining optical microscopy, interferometry, and phase imaging to revolutionize the understanding of myelin production and provide an ultrasensitive, specific assay for testing drug treatments for diseases such as multiple sclerosis. Recently, he has been incorporating artificial intelligence models with phase modalities, a technique known as phase imaging with computational specificity, to improve the speed and accuracy of his biomedical investigations.

Image courtesy of Nil Parikh.

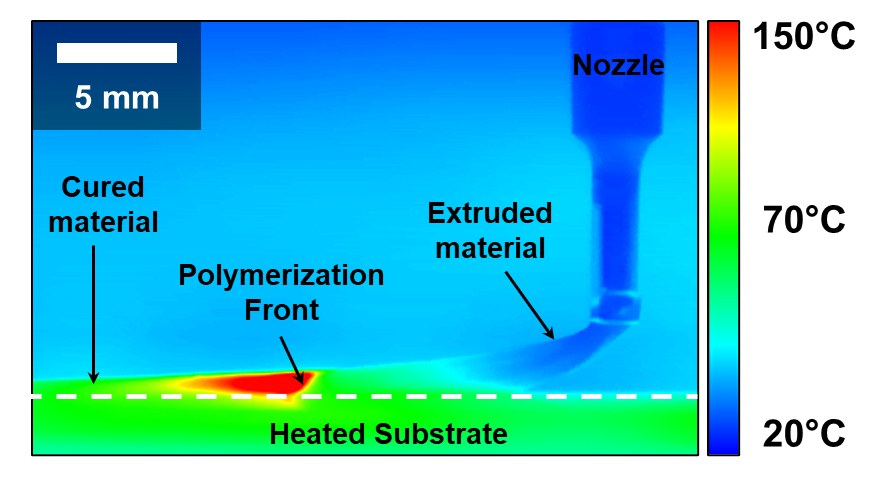

Image courtesy of Nil Parikh.Towards 3D printing of bio-inspired fiber-reinforced composites

Nil Parikh, aerospace engineering

Composites judiciously combine multiple materials to leverage the benefits of each constituent. Natural composites follow a similar scheme, combining soft ("pliable") and hard ("rigid") constituents with remarkable control over the spatial distribution and orientation of the reinforcing particulates to build structures with outstanding mechanical and functional performance. Drawing inspiration from the highly evolved hierarchical structures present within natural composites, we explore the development of 3D printing of bio-inspired fiber-reinforced composites. Rapid curing of the deposited material is achieved by integrating frontal polymerization of dicyclopentadiene. Because 3D printing is a digital process, we design new architectures inspired by biology by coupling digital design, computational imaging, and property prediction. Using this combination of manufacturing and design, we demonstrate the fabrication of synthetic mimics of natural composites in terms of structure and functionality.

Biography

Nil Parikh is a Ph.D. candidate in aerospace engineering under the direction of Professors Nancy Sottos and Philippe Geubelle. He received his undergraduate degree in aerospace engineering from Wichita State University. Parikh has received the Mavis Future Faculty Fellowship, the American Society for Composites Ph.D. Research Scholarship, and the Stillwell Fellowship.

![Graphic reads: Problem definition: Given a video in timeline [0,T], and associated audio in [0, T +T'], generate plausible videos for (T, T+T'].](/images/default-source/news/chatterjee-gsss-image.png?Status=Master&sfvrsn=706da479_1) Image courtesy of Moitreya Chatterjee.

Sound2Sight: Generating visual dynamics from sound and context

Moitreya Chatterjee, electrical and computer engineering

Learning associations across modalities is critical for robust multimodal reasoning, especially when a modality may be missing during inference. In this work, we study this problem in the context of audio-conditioned visual synthesis, a task that is important, for example, in occlusion reasoning. Specifically, our goal is to generate future video frames and their motion dynamics conditioned on audio and a few past frames. To tackle this problem, we present Sound2Sight, a deep variational framework that is trained to learn a per-frame stochastic prior conditioned on a joint embedding of audio and past frames. This embedding is learned via a multi-head attention-based audio-visual transformer encoder. The learned prior is then sampled to further condition a video forecasting module that generates future frames. The stochastic prior allows the model to sample multiple plausible futures that are consistent with the provided audio and the past context. Moreover, to improve the quality and coherence of the generated frames, we propose a multimodal discriminator that differentiates between a synthesized and a real audio-visual clip. We empirically evaluate our approach against closely related prior methods and demonstrate that Sound2Sight significantly outperforms the state of the art in video generation, while also exhibiting diversity.
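For readers unfamiliar with stochastic priors, the toy sketch below illustrates the general idea of drawing one latent vector per future frame from a Gaussian prior, so that different random seeds yield different plausible futures from the same context. It is not the Sound2Sight implementation: `prior_params`, `sample_latent`, `sample_future`, and the three-number `context` are hypothetical stand-ins, and the fixed formula inside `prior_params` merely takes the place of a learned network.

```python
import math
import random

def prior_params(context, t):
    """Hypothetical stand-in for a learned prior network: maps a context
    embedding and a frame index to a Gaussian mean and log-variance."""
    mean = [math.tanh(c + 0.1 * t) for c in context]
    log_var = [-1.0 for _ in context]
    return mean, log_var

def sample_latent(mean, log_var, rng):
    """Reparameterized draw: z = mean + sigma * eps, with eps ~ N(0, 1)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mean, log_var)]

def sample_future(context, num_frames, seed):
    """Draw one latent per future frame. A real system would decode each
    latent, together with past frames, into an image."""
    rng = random.Random(seed)
    return [sample_latent(*prior_params(context, t), rng)
            for t in range(num_frames)]

# Toy joint audio-visual embedding (assumed values, for illustration only).
context = [0.2, -0.5, 1.0]

# Three different seeds give three different latent trajectories.
futures = [sample_future(context, num_frames=4, seed=s) for s in range(3)]
```

Each entry of `futures` is a sequence of four latent vectors. Because every trajectory is conditioned on the same context but uses different noise, the set as a whole mimics the "multiple plausible futures" behavior described in the abstract.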

Biography

Moitreya Chatterjee is a Ph.D. student in the Department of Electrical and Computer Engineering at the University of Illinois Urbana-Champaign under the supervision of Prof. Narendra Ahuja. His research interests span computer vision and multimodal machine learning. Specifically, he has been focusing on designing generative models for multimodal content and making them efficient. His research has been published at premier venues in the field, including ECCV, ICCV, and NeurIPS. Chatterjee holds a master's degree from the University of Southern California (USC) and has received the prestigious Joan and Lalit Bahl Fellowship and the Thomas and Margaret Huang Award for Graduate Research for his academic accomplishments.