
Making High Quality Graphene by Engineering Copper Foils

Joshua D. Wood

Graduate Student, Electrical and Computer Engineering

Graphene, a two-dimensional honeycomb arrangement of carbon atoms, is currently a hot topic in the fields of electrical engineering and physics, and its discovery earned physicists Geim and Novoselov a Nobel Prize this year. A technique known as chemical vapor deposition (CVD) allows large-scale graphene sheets to be grown on a variety of metal surfaces, including nickel, ruthenium, and copper. Of these metals, copper is the one on which graphene CVD supposedly self-terminates at a single layer of graphene, the configuration most people want to use for graphene applications. In this growth, however, the copper substrate plays a critical role in the quality of the graphene produced.

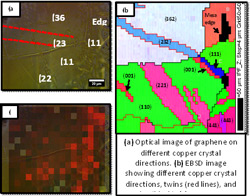

Depending on the underlying copper’s crystallographic directions, foil thickness, and surface roughness, the graphene will nucleate in a variety of locations or not at all. This lack of control over the growth interactions demands copper foil engineering to lower the likelihood of polycrystalline graphene films with poorer electronic characteristics. Using electron backscatter diffraction (EBSD), Raman spectroscopy, atomic force microscopy (AFM), and scanning electron microscopy (SEM), we confirm the strong interaction between graphene nucleation and the copper. Consequently, we attempt to engineer high-quality graphene films by performing long copper anneals and chemical mechanical polishing of the foil.

Comprehension is not the basis for error detection: A conflict-based account of monitoring in speech production

Bonnie Nozari

Cognitive Science

Although speech errors are common, verbal communication is generally successful because speakers can detect (and correct) their own errors. The standard theory of speech-error detection, the perceptual-loop account, posits that the comprehension system monitors production output for errors. Such a comprehension-based monitor, however, cannot explain the double dissociation between comprehension and error-detection ability observed in aphasic patients. We propose a new theory of error detection that is, instead, based on the production process itself. We suggest that a frontal structure, such as the anterior cingulate cortex (ACC), accomplishes error detection by monitoring conflict in the layers of the production system. We implement our theory in the interactive two-step model of word production and use computational simulations to draw precise predictions, which we then test on a sample of aphasic patients. Our results show a strong correlation between error detection and parameters derived from our production model, and no significant correlation between error detection and comprehension measures, thus supporting a production-based monitor such as the one proposed in this research.

Expanding the Breadth and Detail of Object Recognition Systems

Ian Endres

Graduate Student, Department of Computer Science

While computer-vision-based object recognition systems have advanced in recent years, they give limited information about each object they recognize and are guaranteed to fail when presented with a type of object they have never seen before.

In our work, we aim to provide predictions for any object with as much detail as possible. The depth and specificity of a prediction depend on the knowledge available for a given object, and range from predicting only the location of some unknown object to specific details about the taxonomic classification, location, layout, and other detailed properties of the object. Along this continuum, our system can also fill in details about types of objects that have never been seen but belong to a familiar domain, such as when it is presented with an unfamiliar animal.

We take advantage of a broad range of knowledge about objects, such as general appearance properties common to many objects, the taxonomic structures across objects, and detailed models of the structure of objects themselves. Together, these sources of knowledge yield more robust and flexible object recognition systems, especially when the systems are presented with unexpected or unfamiliar objects.

Beckman Institute for Advanced Science and Technology