By Beckman Institute

Published on March 31, 2015

Taewoo Kim

Application of Quantitative Phase Imaging (QPI) to Neuroscience

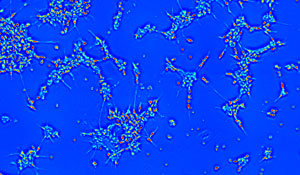

Quantitative Phase Imaging (QPI) is finding rapidly growing application in biology. Recent advances allow QPI to study more complex structures and faster dynamics within biological cells. This talk demonstrates an application of QPI to neuroscience. QPI is a valuable tool for studying neurons, which are minuscule and highly dynamic structures. Studies of human neuronal network development at large scale and of neuron filopodia dynamics show how QPI opens up a new way to examine structures that are small yet essential to our body functions.

Pooya Khorrami

An Analysis of Unsupervised Learning in Light of Recent Advances

In the last few years, the computer vision community has seen renewed interest in using deep neural networks to solve machine learning tasks. One network model in particular, the convolutional neural network (CNN), has demonstrated impressive performance in several computer vision tasks, including object recognition, object detection, and scene recognition. A major reason for the success of many CNN-based models is that they were trained on datasets containing more than one million labeled images. These techniques fall into a category of machine learning called supervised learning. Unfortunately, obtaining millions of labeled images can be both very time-consuming and expensive. In this talk, we discuss the benefits of using unlabeled data (an approach known as unsupervised learning) to initialize the deep neural network model and show that, in certain scenarios, we can obtain performance superior to that of traditional supervised learning methods. We find that unsupervised learning, as expected, helps when the ratio of unlabeled to labeled samples is high and, surprisingly, hurts when the ratio is low. We conduct our experiments on the task of object recognition using two accepted benchmarks: the CIFAR-10 and STL-10 datasets.

Tim Mahrt

Perceiving the Information Status of Words in Speech

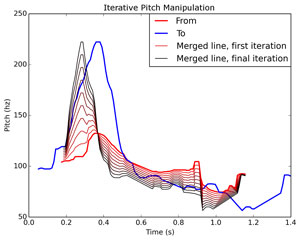

Intonation, pause and word duration, and loudness can convey a wide variety of information: they can signal mood, inform the listener that the speaker is done talking, disambiguate sentence parsing, or highlight the important words in an utterance. In English, the flow of information in an utterance is also regulated through these speech parameters: words that are new or important to the discourse tend to be produced with greater duration, greater loudness, and higher pitch than words that are already part of the discourse or are less important. If speakers produce these cues, then listeners should be using them to process the information conveyed in an utterance. But which aspects of the acoustics are listeners sensitive to? Using resynthesized utterances, we probe which changes in acoustics lead to changes in perception and find that the information status of a word is primarily cued through pitch. This research contributes to our understanding of language perception and has applications in speech synthesis and language education.